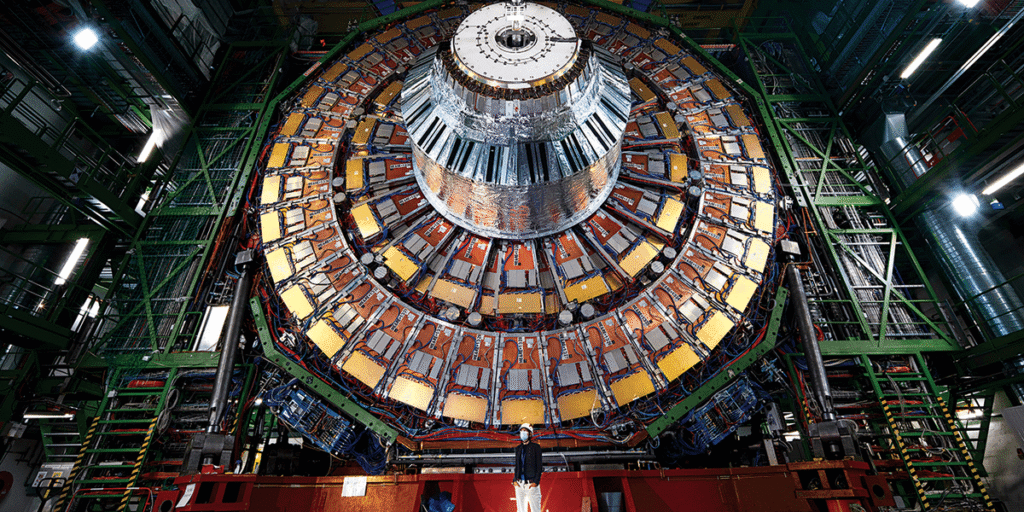

In the time it takes you to read this sentence, the Large Hadron Collider (LHC) will have smashed billions of particles together. In all likelihood, it will have found exactly what it found yesterday: more evidence to support the Standard Model of particle physics.

For the engineers who built this 27-kilometer-long ring, this consistency is a triumph. But for theoretical physicists, it has been rather frustrating. As Matthew Hutson reports in “AI Hunts for the Next Big Thing in Physics,” the field is currently gripped by a quiet crisis. In an email discussing his reporting, Hutson explains that the Standard Model, which describes the known elementary particles and forces, is not a complete picture. “So theorists have proposed new ideas, and experimentalists have built large facilities to test them, but despite the gobs of data, there have been no big breakthroughs,” Hutson says. “There are key parts of reality we’re completely missing.”

That’s why researchers are turning artificial intelligence loose on particle physics. They aren’t simply asking AI to comb through accelerator data to confirm existing theories, Hutson explains. They’re asking AI to point the way toward theories that they’ve never imagined. “Instead of looking to support theories that humans have generated,” he says, “unsupervised AI can highlight anything out of the ordinary, expanding our reach into unknown unknowns.” By asking AI to flag anomalies in the data, researchers hope to find their way to “new physics” that extends the Standard Model.

On the surface, this article might sound like another “AI for X” story. As IEEE Spectrum’s AI editor, I get a steady stream of pitches for such stories: AI for drug discovery, AI for farming, AI for wildlife monitoring. Often what that really means is faster data processing or automation around the edges. Useful, sure, but incremental.

What struck me in Hutson’s reporting is that this effort feels different. Instead of analyzing experimental data after the fact, the AI essentially becomes part of the instrument, scanning for subtle patterns and deciding in real time what’s interesting. At the LHC, detectors record 40 million collisions per second. There’s simply no way to preserve all that data, so engineers have always had to build filters to decide which events get saved for analysis and which are discarded; nearly everything is thrown away.

Now those split-second decisions are increasingly handed to machine learning systems running on field-programmable gate arrays (FPGAs) connected to the detectors. The code must run on the chip’s limited logic and memory, and compressing a neural network into that hardware isn’t easy. Hutson describes one theorist pleading with an engineer, “Which of my algorithms fits on your bloody FPGA?”

This moment is part of a much older pattern. As Hutson writes in the article, new instruments have opened doors to the unexpected throughout the history of science. Galileo’s telescope revealed moons circling Jupiter. Early microscopes uncovered entire worlds of “animalcules” swimming around. These better tools didn’t just answer existing questions; they made it possible to ask new ones.

If there’s a crisis in particle physics, in other words, it may not just be about missing particles. It’s about how to look beyond the limits of the human imagination. Hutson’s story suggests that AI might not solve the mysteries of the universe outright, but it could change how we search for answers.
