What’s the difference between a silly idea and a brilliant one? Sometimes, it simply comes down to resources. Virtually unlimited funds, like unlimited thrust, can get even a crazy idea off the ground.

And so it may be for the idea of putting AI data centers in orbit. In a rare moment of unalloyed agreement, some of the richest and most powerful men in technology are staunchly backing the idea. The group includes Elon Musk, Jeff Bezos, Jensen Huang, Sam Altman, and Google CEO Sundar Pichai. In all likelihood, hundreds of people are now working on the concept of space data centers at the companies directly or indirectly controlled by these men: SpaceX, Starlink, Tesla, Amazon, Blue Origin, Nvidia, OpenAI, and Google, among others.

Likely costs to design, build, and launch a 1-GW orbital data center, based on a network of some 4,300 satellites and including operating costs over a five-year period, would exceed US $50 billion. That’s about three times the cost of a 1-GW data center on Earth, including five years of operation. John MacNeill

So how much would it cost to start training large language models in space? Probably the best accounting is one created by aerospace engineer Andrew McCalip. McCalip’s exhaustive, detailed analysis includes interactive sliders that let you compare costs for space-based and terrestrial data centers in the range of 1 to 100 gigawatts. One-gigawatt data centers are being built now on terra firma, and Meta has announced plans for a 5-GW facility, with expected completion some time after 2030.

In an interview, McCalip says his initial rough calculations several years ago suggested that data centers in space would cost in the range of 7 to 10 times more, per gigawatt of capacity, than their terrestrial counterparts. “It just wasn’t sensible,” he says. “Not even close.” But when Elon Musk began publicly backing the idea, McCalip revisited the numbers using publicly available information about Starlink’s and Tesla’s technologies and capabilities.

That changed the picture considerably. The figures in his online analysis assume an orbital network of data-center satellites that borrows heavily from Musk’s tech treasure chest: “essentially…you just start putting some radiation-resistant ASIC chips on the Starlink fleet and you start growing edge capacity organically on the Starlink fleet,” McCalip says. The network would rely on the kind of watt-efficient GPU architecture used in Teslas for self-driving, he adds. “You start dropping these onto the backs of Starlinks. You can slowly grow this out, and this would be roughly the performance that you would get.”

Bottom line: With some solid but not necessarily heroic engineering, the cost of an orbital data center could be as little as three times that of the comparable terrestrial one. That differential, while still high, at least nudges the idea out of the immediately dismissible category. “I have my particular views, but I want the data to speak for itself,” McCalip says.

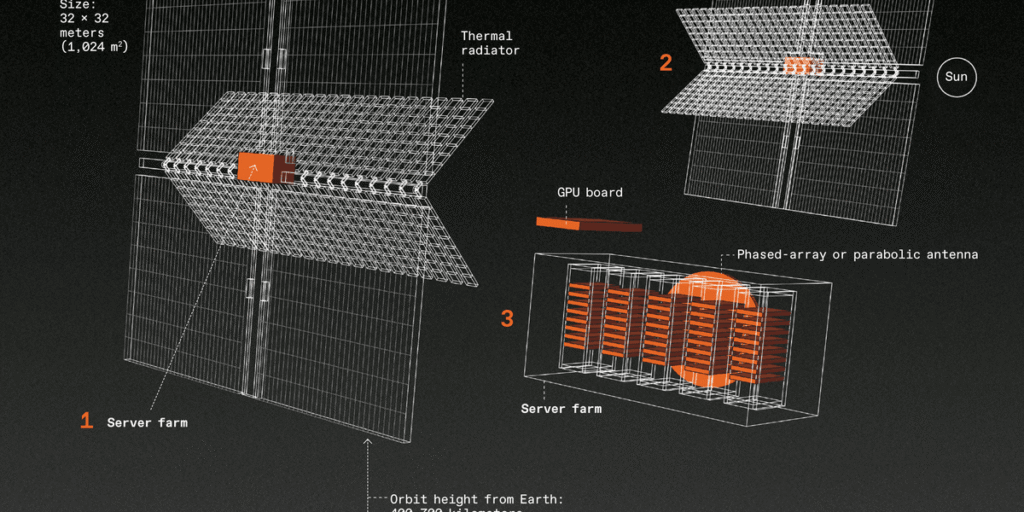

For this illustration, we picked a configuration with an aggregate 1 GW of capacity. The network would consist of some 4,300 satellites, each of which would be equipped with a 1,024-square-meter solar array that generates 250 kilowatts. The data center on that satellite, powered by the array, might have at least 175 GPUs; McCalip notes that a standard GPU rack, Nvidia’s NVL72, has 72 GPUs and requires 120 to 140 kW.
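For readers who want to check the arithmetic behind that configuration, here is a minimal Python sketch. The input numbers are the ones quoted in this article; the function and constant names are illustrative choices, not taken from McCalip’s model. Dividing the NVL72 rack’s power draw evenly across its 72 GPUs is a simplifying assumption, since the rack figure presumably covers everything in the rack, not only the GPUs.

```python
# Back-of-the-envelope check of the satellite configuration described above.
# All inputs are figures quoted in the article; names are illustrative only.

NUM_SATELLITES = 4_300        # satellites in the 1-GW network
ARRAY_POWER_KW = 250.0        # solar-array output per satellite
ARRAY_AREA_M2 = 1_024.0       # solar-array area per satellite
GPUS_PER_SATELLITE = 175      # "at least 175 GPUs" per satellite
NVL72_GPUS = 72               # GPUs in one Nvidia NVL72 rack
NVL72_RACK_KW = (120.0, 140.0)  # quoted rack power range


def fleet_power_gw(num_satellites: int, array_power_kw: float) -> float:
    """Aggregate generating capacity of the fleet, in gigawatts."""
    return num_satellites * array_power_kw / 1e6


def array_power_density_w_per_m2(array_power_kw: float, area_m2: float) -> float:
    """Electrical output per square meter of solar array."""
    return array_power_kw * 1_000 / area_m2


def power_budget_per_gpu_kw(array_power_kw: float, gpus: int) -> float:
    """Array power available per GPU if the whole array fed the GPUs."""
    return array_power_kw / gpus


def gpus_at_rack_rate(array_power_kw: float, rack_kw: float,
                      gpus_per_rack: int = NVL72_GPUS) -> float:
    """GPUs one array could power at the rack's per-GPU draw.

    Assumption: rack power is split evenly across its GPUs.
    """
    return array_power_kw * gpus_per_rack / rack_kw


if __name__ == "__main__":
    print(f"Fleet capacity: {fleet_power_gw(NUM_SATELLITES, ARRAY_POWER_KW):.3f} GW")
    density = array_power_density_w_per_m2(ARRAY_POWER_KW, ARRAY_AREA_M2)
    print(f"Array output:   {density:.0f} W per square meter")
    budget = power_budget_per_gpu_kw(ARRAY_POWER_KW, GPUS_PER_SATELLITE)
    print(f"Power per GPU:  {budget:.2f} kW at 175 GPUs per satellite")
    for rack_kw in NVL72_RACK_KW:
        n = gpus_at_rack_rate(ARRAY_POWER_KW, rack_kw)
        print(f"At {rack_kw:.0f} kW per NVL72 rack: about {n:.0f} GPUs per satellite")
```

Under these assumptions, the fleet adds up to slightly more than 1 GW. A 250-kW array would feed roughly 130 to 150 GPUs at NVL72 power levels, so reaching 175 GPUs per satellite implies hardware that draws somewhat less per GPU, which fits McCalip’s assumption of a watt-efficient architecture.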

The total cost of the satellite network would be around US $51 billion, including launch and five years of operational expenses; a comparable terrestrial system would cost about $16 billion over the same period.
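The same kind of quick check works for the cost comparison. The sketch below uses only the two totals and the satellite count quoted in this article; the function names are illustrative, and the per-satellite figure is just the all-in total divided evenly across the fleet, so it includes launch and five years of operations and should not be read as a hardware price.

```python
# Rough cost comparison using the totals quoted in the article.
# Names are illustrative; this is not McCalip's cost model.

ORBITAL_COST_USD = 51e9       # satellite network, launch, 5 years of operations
TERRESTRIAL_COST_USD = 16e9   # comparable terrestrial system, same period
NUM_SATELLITES = 4_300
CAPACITY_W = 1e9              # 1 GW of aggregate capacity


def cost_ratio(orbital_usd: float, terrestrial_usd: float) -> float:
    """How many times more the orbital option costs."""
    return orbital_usd / terrestrial_usd


def cost_per_satellite_usd(total_usd: float, num_satellites: int) -> float:
    """All-in cost spread evenly over the fleet (an averaging assumption)."""
    return total_usd / num_satellites


def cost_per_watt_usd(total_usd: float, capacity_w: float) -> float:
    """Five-year all-in cost per watt of capacity."""
    return total_usd / capacity_w


if __name__ == "__main__":
    ratio = cost_ratio(ORBITAL_COST_USD, TERRESTRIAL_COST_USD)
    print(f"Orbital vs. terrestrial: {ratio:.2f}x")
    per_sat = cost_per_satellite_usd(ORBITAL_COST_USD, NUM_SATELLITES)
    print(f"All-in cost per satellite: ${per_sat / 1e6:.1f} million")
    print(f"Orbital:     ${cost_per_watt_usd(ORBITAL_COST_USD, CAPACITY_W):.0f} per watt")
    print(f"Terrestrial: ${cost_per_watt_usd(TERRESTRIAL_COST_USD, CAPACITY_W):.0f} per watt")
```

The ratio works out to a little over three, in line with the “three times” figure above, and the all-in cost averages just under $12 million per satellite.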

Silly? Not silly? You decide.
